python新手,程序比对了n遍,没问题啊 结果网址出现乱码

调度器

#coding:utf-8

from baike_spiter import url_manger, html_download, html_paser, html_outputer

class Spitermain(object):

#初始化爬虫

def __init__(self):

# url管理器

self.urls = url_manger.UrlManger()

# 下载器

self.downloader = html_download.HtmlDownload()

# 解 析器

self.parser = html_paser.HtmlPaser()

# 输出器

self.outputer = html_outputer.HtmlOutputer()

# craw方法表示爬虫的调度程序

def craw(self, root_url):

count = 1

# 将入口url添加进url管理器

self.urls.add_new_url(root_url)

while self.urls.has_new_url():

try:

new_url = self.urls.get_new_url()

print ("craw %d :%s"% (count, new_url))

html_cont = self.downloader.download(new_url)

new_urls, new_data = self.parser.parse(new_url, html_cont)

self.urls.add_new_urls(new_urls)

self.outputer.collect_data(new_data)

if count == 10:

break

count = count + 1

except:

print "craw failed"

self.outputer.output_html()

if __name__=="__main__":

root_url = "http://baike.baidu.com/item/Python"

obj_spiter = Spitermain()

obj_spiter.craw(root_url)

url管理器

#encoding=utf-8

class UrlManger(object):

def __init__(self):

self.new_urls=set() #待爬的url集合

self.old_urls=set() #已经爬取的url集合

def add_new_url(self,url):

if url is None:

return

if url not in self.new_urls and url not in self.old_urls:

self.new_urls.add(url)

return

def add_new_urls(self,urls):

if urls is None or len(urls) == 0:

return

for url in urls:

self.add_new_url(url)

def has_new_url(self):

return len(self.new_urls)!=0

def get_new_url(self):

new_url=self.new_urls.pop() #获取url,并从集合中剔除url

self.old_urls.add(new_url)

return new_url

下载器

import urllib2

class HtmlDownload(object):

def download(self,url):

if url is None:

return None

response = urllib2.urlopen(url)

if response.getcode() != 200:

return None

return response.read()

#coding:utf-8

import urlparse

import bs4

import re

解析器

class HtmlPaser(object):

def parse(self, page_url, html_cont):

if page_url is None or html_cont is None:

return

soup = bs4.BeautifulSoup(html_cont, 'html.parser', from_encoding='utf-8')

new_urls=self._get_new_urls(page_url,soup)

new_data=self._get_new_data(page_url,soup)

return new_urls , new_data

def _get_new_urls(self, page_url, soup):

# 新的带爬取的url集合

new_urls=set()

#/view/123.htm

links=soup.find_all('a',href=re.compile(r"/item/"))

for link in links:

new_url=link['href']

#urljoin 的作用是将newurl按照page-url 的格式创建全新的url

new_full_url=urlparse.urljoin(page_url,new_url)

new_urls.add(new_full_url)

return new_urls

def _get_new_data(self, page_url, soup):

res_data={}

#url

res_data['url']=page_url

#<dd class="lemmaWgt-lemmaTitle-title"><h1>Python</h1>

title_node=soup.find('dd',class_="lemmaWgt-lemmaTitle-title").find('h1')

res_data['title']=title_node.get_text()

#<div class="lemma-summary" label-module="lemmaSummary">

summary_node=soup.find('div',class_="lemma-summary")

res_data['summary']=summary_node.get_text()

return res_data

输出器

class HtmlOutputer(object):

def __init__(self):

self.datas=[]

def collect_data(self, new_data):

if data is None:

return

self.datas.append(new_data)

def output_html(self):

fout=open("output.html",'w',encoding='utf-8')

fout.write("<html>")

fout.write("<body>")

fout.write("<table>")

for data in self.datas:

fout.write("<tr>")

fout.write("<td>%s</td>"%data['url'])

fout.write("<td>%s</td>" % data['title'].encode('utf-8'))

fout.write("<td>%s</td>" % data['summary'].encode('utf-8'))

fout.write("</tr>")

fout.write("</table>")

fout.write("</body>")

fout.write("</html>")

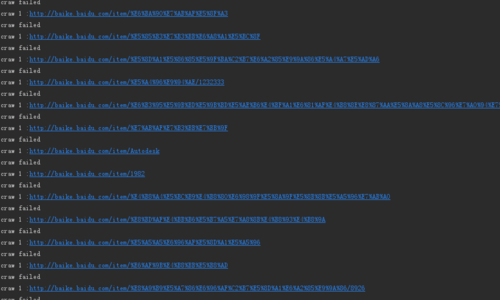

结果 并且打开创建的文件,上面无任何信息

并且打开创建的文件,上面无任何信息