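Principal Component Analysis (PCA) is an important topic in multivariate statistics and is widely used in machine learning and other fields. Its main purpose is to reduce the dimensionality of high-dimensional data. PCA replaces the original n features with a smaller number k of new features. Each new feature is a linear combination of the old ones, the combinations are chosen to maximize the sample variance, and the k new features are mutually uncorrelated. For more on PCA, see: https://en.wikipedia.org/wiki/Principal_component_analysis.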
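The variance-maximization idea can be written compactly. This is standard background rather than something taken from the original post; here X denotes the centered data matrix with p samples in rows and n features in columns, the same shapes the code later in this post uses (`p, n = np.shape(Mat)`):

$$
S = \frac{1}{p-1} X^{\top} X, \qquad
w_1 = \arg\max_{\lVert w \rVert = 1} w^{\top} S w
$$

$$
S w_j = \lambda_j w_j, \quad \lambda_1 \ge \lambda_2 \ge \cdots \ge \lambda_n \ge 0, \qquad
Z = X W_k, \quad W_k = [\,w_1, \dots, w_k\,], \qquad
\text{cumulative contribution rate} = \frac{\sum_{j=1}^{k} \lambda_j}{\sum_{j=1}^{n} \lambda_j}
$$

Because $\operatorname{Cov}(Z) = W_k^{\top} S W_k = \operatorname{diag}(\lambda_1, \dots, \lambda_k)$, the j-th new feature has sample variance $\lambda_j$ and distinct new features are uncorrelated, which is why the eigenvalues measure how much each component contributes.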

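The main steps of PCA are as follows:

1. Organize the data into a form the model can use (samples in rows, features in columns);
2. Compute the mean of each feature;
3. Subtract the corresponding feature mean from every sample (centering);
4. Compute the covariance matrix;
5. Find the eigenvalues and eigenvectors of the covariance matrix;
6. Reorder the eigenvalues and eigenvectors so that the eigenvalues are in descending order;
7. Compute the cumulative contribution rate of the eigenvalues;
8. Using the cumulative contribution rate and a chosen threshold, select a subset of the eigenvectors;
9. Project the original data (as centered in step 3) onto the selected eigenvectors.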
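The nine steps above can be condensed into one NumPy function. This is a minimal sketch, not the author's code: the name `pca_steps` and its `rate` parameter are hypothetical, and it assumes the number of components is chosen by cumulative contribution rate (step 8) rather than by a fixed count.

```python
import numpy as np


def pca_steps(data, rate=0.95):
    """Hypothetical helper that follows steps 1-9; keeps the fewest
    components whose cumulative contribution rate reaches `rate`."""
    X = np.asarray(data, dtype=float)              # step 1: samples in rows, features in columns
    mean = X.mean(axis=0)                          # step 2: mean of each feature
    Xc = X - mean                                  # step 3: centering
    cov = Xc.T @ Xc / (X.shape[0] - 1)             # step 4: sample covariance matrix
    eigvals, eigvecs = np.linalg.eigh(cov)         # step 5: eigh returns eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1]              # step 6: indices for descending eigenvalues
    eigvals = np.clip(eigvals[order], 0.0, None)   # clip tiny negative round-off values to 0
    eigvecs = eigvecs[:, order]                    # keep eigenvector columns aligned with eigenvalues
    contrib = np.cumsum(eigvals) / eigvals.sum()   # step 7: cumulative contribution rate
    k = int(np.searchsorted(contrib, rate)) + 1    # step 8: smallest k with contrib[k-1] >= rate
    k = min(k, len(eigvals))                       # guard: round-off can leave contrib[-1] just below 1
    return Xc @ eigvecs[:, :k], contrib            # step 9: project the centered data
```

For the test matrix used later in the post, `pca_steps(mat, rate=0.95)` keeps two components, because the first eigenvalue alone accounts for only about 85% of the total variance.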

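The covariance matrix can be decomposed either by computing the eigenvectors of this symmetric matrix or by singular value decomposition (SVD). Scikit-learn implements PCA with SVD (applied to the centered data matrix). For an introduction to SVD and its theory, see: Singular Value Decomposition (SVD) of a Matrix (Theory).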
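The two routes are linked by a simple identity: if the centered data is $X_c = U \operatorname{diag}(s) V^{\top}$, then the covariance eigenvalues are $s^2/(p-1)$ and the rows of $V^{\top}$ are the principal directions. A small NumPy check of this, assuming the same 3 × 5 test matrix as the code later in the post:

```python
import numpy as np

# Test data from this post: 3 samples (rows) x 5 features (columns)
X = np.array([[-1, -1, 0, 2, 1], [2, 0, 0, -1, -1], [2, 0, 1, 1, 0]], dtype=float)
Xc = X - X.mean(axis=0)          # center each column
p = Xc.shape[0]                  # number of samples

# Route 1: eigendecomposition of the symmetric covariance matrix
cov = Xc.T @ Xc / (p - 1)
eigvals = np.linalg.eigh(cov)[0][::-1]      # eigenvalues in descending order

# Route 2: SVD of the centered data matrix, Xc = U @ diag(s) @ Vt
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
eig_from_svd = s**2 / (p - 1)               # covariance eigenvalues recovered from singular values

# eigvals has n = 5 entries, the SVD gives min(p, n) = 3; the extra eigenvalues are (numerically) zero
print(eigvals[:len(s)])
print(eig_from_svd)
```

The projected data can then be read off as `U * s`, which equals `Xc @ Vt.T`, so the covariance matrix never has to be formed explicitly.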

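This article implements PCA in three ways. The first is the original algorithm, i.e. the procedure described above; for the detailed calculations, see A tutorial on Principal Components Analysis, Lindsay I Smith. The second is the original algorithm with SVD: NumPy already implements SVD (`np.linalg.svd`) and returns the singular values in descending order, which removes the step of reordering the eigenvalues and eigenvectors. The third uses the PCA class from Python's Scikit-learn module directly, to verify that the first two methods are correct.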

The complete Python code implementing PCA in these three ways is as follows:

import numpy as npfrom sklearn.decomposition import PCAimport sys#returns choosing how many main factorsdef index_lst(lst, component=0, rate=0):

#component: numbers of main factors

#rate: rate of sum(main factors)/sum(all factors)

#rate range suggest: (0.8,1)

#if you choose rate parameter, return index = 0 or less than len(lst)

if component and rate:

print('Component and rate must choose only one!')

sys.exit(0) if not component and not rate:

print('Invalid parameter for numbers of components!')

sys.exit(0) elif component:

print('Choosing by component, components are %s......'%component) return component else:

print('Choosing by rate, rate is %s ......'%rate) for i in range(1, len(lst)): if sum(lst[:i])/sum(lst) >= rate: return i return 0def main():

# test data

mat = [[-1,-1,0,2,1],[2,0,0,-1,-1],[2,0,1,1,0]]

# simple transform of test data

Mat = np.array(mat, dtype='float64')

print('Before PCA transforMation, data is:\n', Mat)

print('\nMethod 1: PCA by original algorithm:')

p,n = np.shape(Mat) # shape of Mat

t = np.mean(Mat, 0) # mean of each column

# substract the mean of each column

for i in range(p): for j in range(n):

Mat[i,j] = float(Mat[i,j]-t[j])

# covariance Matrix

cov_Mat = np.dot(Mat.T, Mat)/(p-1)

# PCA by original algorithm

# eigvalues and eigenvectors of covariance Matrix with eigvalues descending

U,V = np.linalg.eigh(cov_Mat)

# Rearrange the eigenvectors and eigenvalues

U = U[::-1] for i in range(n):

V[i,:] = V[i,:][::-1] # choose eigenvalue by component or rate, not both of them euqal to 0

Index = index_lst(U, component=2) # choose how many main factors

if Index:

v = V[:,:Index] # subset of Unitary matrix

else: # improper rate choice may return Index=0

print('Invalid rate choice.\nPlease adjust the rate.')

print('Rate distribute follows:')

print([sum(U[:i])/sum(U) for i in range(1, len(U)+1)])

sys.exit(0) # data transformation

T1 = np.dot(Mat, v) # print the transformed data

print('We choose %d main factors.'%Index)

print('After PCA transformation, data becomes:\n',T1)

# PCA by original algorithm using SVD

print('\nMethod 2: PCA by original algorithm using SVD:') # u: Unitary matrix, eigenvectors in columns

# d: list of the singular values, sorted in descending order

u,d,v = np.linalg.svd(cov_Mat)

Index = index_lst(d, rate=0.95) # choose how many main factors

T2 = np.dot(Mat, u[:,:Index]) # transformed data

print('We choose %d main factors.'%Index)

print('After PCA transformation, data becomes:\n',T2)

# PCA by Scikit-learn

pca = PCA(n_components=2) # n_components can be integer or float in (0,1)

pca.fit(mat) # fit the model

print('\nMethod 3: PCA by Scikit-learn:')

print('After PCA transformation, data becomes:')

print(pca.fit_transform(mat)) # transformed data

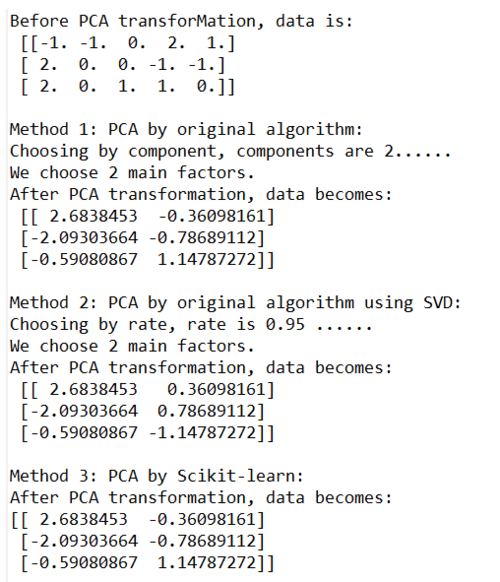

main()运行以上代码,输出结果为:

Eclipse运行结果

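This shows that all three methods work: they produce the same transformed data, up to a possible sign flip of each column, since an eigenvector is only determined up to sign. With this, the concrete implementation of PCA should be clear.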
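To check this agreement numerically rather than by eye, one can compare two projections column by column while allowing each column to flip sign. A minimal NumPy-only sketch; the helper name `same_up_to_sign` is hypothetical, and it reproduces the core of Methods 1 and 2 on the post's test matrix:

```python
import numpy as np


def same_up_to_sign(A, B, atol=1e-8):
    """Hypothetical helper: True if every column of B equals the matching column of A or its negative."""
    A, B = np.asarray(A, dtype=float), np.asarray(B, dtype=float)
    if A.shape != B.shape:
        return False
    for j in range(A.shape[1]):
        a, b = A[:, j], B[:, j]
        if not (np.allclose(a, b, atol=atol) or np.allclose(a, -b, atol=atol)):
            return False
    return True


X = np.array([[-1, -1, 0, 2, 1], [2, 0, 0, -1, -1], [2, 0, 1, 1, 0]], dtype=float)
Xc = X - X.mean(axis=0)                       # centered data
cov = Xc.T @ Xc / (X.shape[0] - 1)            # sample covariance matrix

# Method 1 style: eigh, then reorder columns to descending eigenvalues
w, V = np.linalg.eigh(cov)
T1 = Xc @ V[:, ::-1][:, :2]

# Method 2 style: SVD of the covariance matrix (singular values already descending)
u, d, vt = np.linalg.svd(cov)
T2 = Xc @ u[:, :2]

print(same_up_to_sign(T1, T2))
```

The output of `pca.fit_transform(mat)` from Method 3 could be passed as the second argument in the same way, since Scikit-learn also projects the centered data onto the same principal directions.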

Interested readers can try implementing it in other languages.

References:

Wikipedia, Principal component analysis: https://en.wikipedia.org/wiki/Principal_component_analysis.

A detailed and comprehensive PCA tutorial: A tutorial on Principal Components Analysis, Lindsay I Smith.

Blog: Singular Value Decomposition (SVD) of a Matrix (Theory): http://www.cnblogs.com/jclian91/p/8022426.html.

Blog: Principal Component Analysis (PCA): https://www.cnblogs.com/zhangchaoyang/articles/2222048.html.

Scikit-learn PCA documentation: http://scikit-learn.org/stable/modules/generated/sklearn.decomposition.PCA.html.

Author: 但盼风雨来_jc

Link: https://www.jianshu.com/p/9cf498a1ab56
